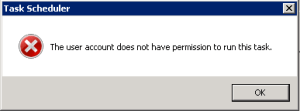

I ran into a problem with the Windows 7 Task Scheduler. I had a task configured, and it was running correctly at the specified time. But whenever I opened the Task Scheduler and tried to run the task manually, it failed with this error:

I had no idea what this error meant. It could’ve meant that the user account that the task was configured to run under didn’t have permission to run the task — but that didn’t make sense, because the task ran fine at its scheduled time. It could’ve meant that the user account I was using didn’t have permission to run the task — but I was running as a system admin. I spent a while searching Google, and while I found people talking about the error, I couldn’t find any useful information about what it meant or how to fix it. Finally, I whipped out my old friend ProcMon, which helped me see what was happening:

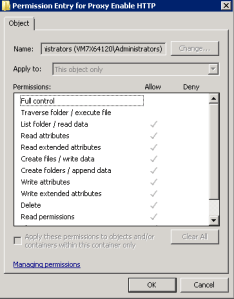

The Windows 7 Task Scheduler stores tasks as individual XML files in the directory C:\Windows\System32\Tasks. This task in particular had been created by a script using the Schtasks.exe utility. The permissions Schtasks had set on the task file were very strange: it had granted the Administrators group every permission except Execute.

Oddly enough, you need to have Execute permission on the task file in order to run the task. The file's permissions can be edited through the Windows UI, or from the command line by running:

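cacls "C:\Windows\System32\Tasks\Task Name" /E /G Administrators:F

Naturally, you’ll need to replace “Task Name” with the actual name of the task, and Administrators with the user or group to grant access to. The /E switch tells cacls to edit the existing ACL; without it, /G replaces the whole ACL with just the entry you specify.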
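
If you have several tasks to fix, the same idea can be scripted. Below is a minimal sketch in Python that builds the equivalent commands using icacls, the newer replacement for cacls. The helper names (`task_file_path`, `show_acl_command`, `grant_full_control_command`) are my own inventions for illustration, and the script only prints the commands rather than running them, so you can review them first and then paste them into an elevated command prompt.

```python
import ntpath
import subprocess

# Directory where the Windows 7 Task Scheduler keeps its task files.
TASKS_DIR = r"C:\Windows\System32\Tasks"


def task_file_path(task_name: str) -> str:
    """Return the full path of the task file for a task name (hypothetical helper)."""
    # ntpath gives Windows path semantics regardless of the OS running the script.
    return ntpath.join(TASKS_DIR, task_name)


def show_acl_command(task_name: str) -> list[str]:
    """Build an icacls command that displays the task file's current ACL."""
    return ["icacls", task_file_path(task_name)]


def grant_full_control_command(task_name: str,
                               principal: str = "Administrators") -> list[str]:
    """Build an icacls command that grants Full Control (F) to `principal`.

    icacls /grant adds to the existing ACL rather than replacing it.
    """
    return ["icacls", task_file_path(task_name), "/grant", f"{principal}:F"]


if __name__ == "__main__":
    # "Task Name" is a placeholder; substitute the real task name.
    for cmd in (show_acl_command("Task Name"),
                grant_full_control_command("Task Name")):
        # list2cmdline quotes arguments that contain spaces, cmd.exe style.
        print(subprocess.list2cmdline(cmd))
```

Running the first printed command before and after the second is a quick way to confirm that the Execute permission actually changed.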